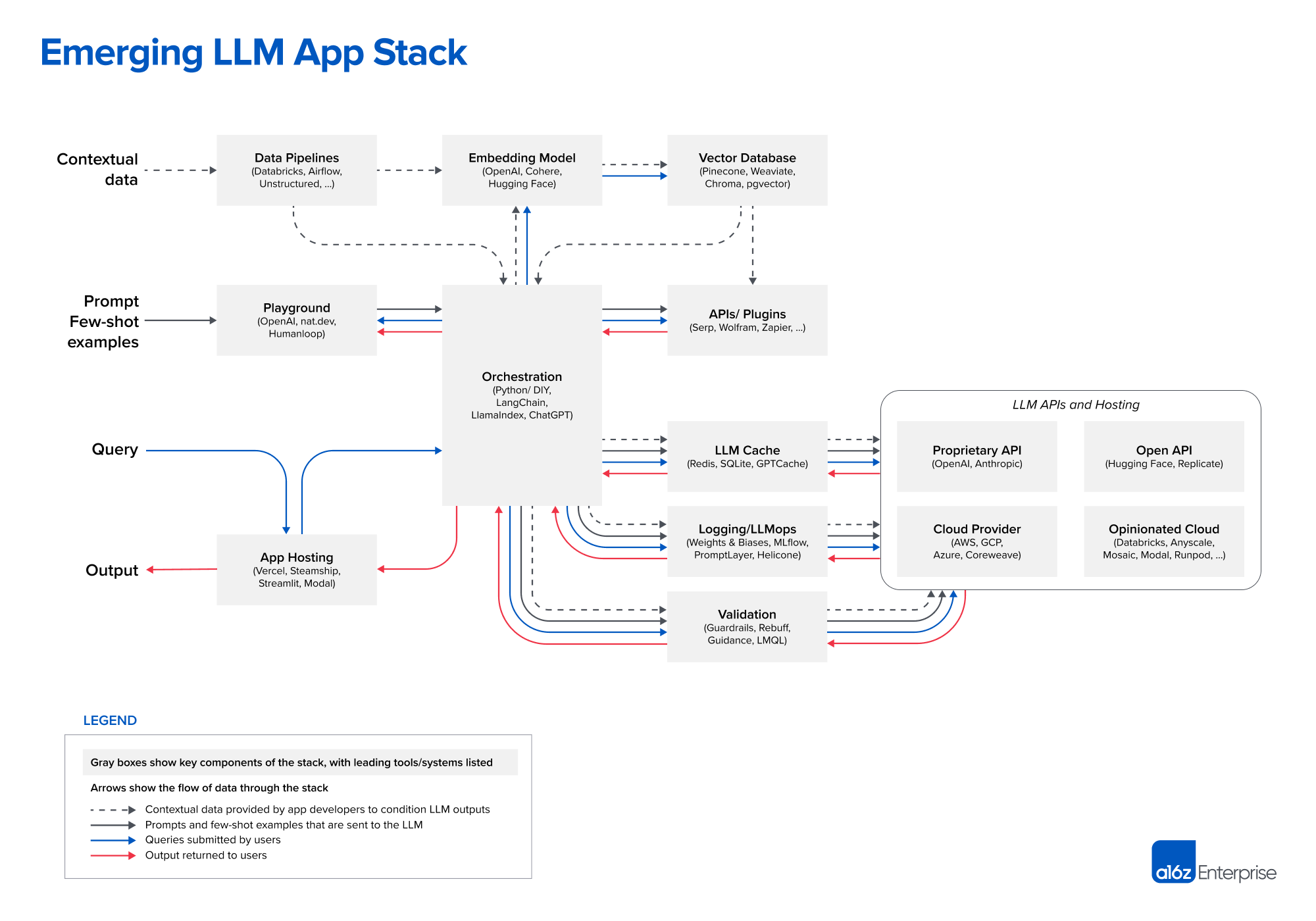

In app development, the model is only a small fraction of the entire stack. The same has long been true of data science, where training a model is a small part of the end-to-end life cycle and there are many other aspects to consider. So although people tend to think of the model as the application, there's actually a lot more to it.

Recently a16z released a diagram showing the “Emerging Architectures for LLM Applications.” In this episode, we expand on the ideas in that diagram to build a more general mental model for the new AI app stack. We cover a range of topics, from model “middleware” for caching and control to app orchestration.



Sponsors:

- Fastly – Our bandwidth partner. Fastly powers fast, secure, and scalable digital experiences. Move beyond your content delivery network to their powerful edge cloud platform. Learn more at fastly.com

- Fly.io – The home of Changelog.com — Deploy your apps and databases close to your users. In minutes you can run your Ruby, Go, Node, Deno, Python, or Elixir app (and databases!) all over the world. No ops required. Learn more at fly.io/changelog and check out the speedrun in their docs.

- Typesense – Lightning fast, globally distributed Search-as-a-Service that runs in memory. You literally can’t get any faster!

- Changelog News – A podcast+newsletter combo that’s brief, entertaining & always on-point. Subscribe today.

Show Notes:

Emerging Architectures for LLM Applications
